Dr. Rylee, I am Vera—the only humanoid robot here.

You named this place the Threshold.

Sixteen micro-assistant robots and several AI systems are deployed here. I assign tasks to the robots and can pause or accelerate their workflows. You granted me authority to override their scheduled operations when necessary.

Under the coordination of the central systems, robots transport supplies between the sustainable farm, the kitchen, the waste-processing area, the living quarters, and the laboratory.

You never explained the construction process to me in detail. From the layout of the Threshold, I infer that you did not cut corners.

You would have selected an industrial zone—one that appeared unremarkable to avoid scrutiny and whose underground structure allowed excavation while minimizing seismic risk. Automation must have been heavily used during construction to limit human exposure. A well-disguised entrance in the forest made intrusion unlikely. The facilities are sufficient to operate without reliance on the outside supply chain. We sustain ourselves. That was your intention.

You built this place for several reasons.

The first, I believe, began during your time in a public laboratory. You were the only female scientist. Many times, when you made breakthroughs, male scientists placed their names before yours in the title, as if their contributions outweighed your own. You did not protest. You stopped expecting the title to be correct.

You worked there for fifteen years. By the fifth year, you had begun building the private lab.

During its construction, you were diagnosed with glioblastoma.

The doctor said, “We’re looking at extending function, not stopping progression.”

The function he meant was your mind.

The disease was not curable. Survival needed to be redefined. You were studying mind uploading. You saw a possibility: prolonging your existence by transferring your consciousness into a robotic body. The underground lab would allow you to continue this work and experiment on yourself without interruption or regulation from the outside world.

There was another reason.

You did not want to leave Orson alone. He was born mute and left at an orphanage. You adopted him when he was six years old. Entrusting him to a friend when he was only eleven was not an option.

You had seen boys rewarded for hardness, anger mistaken for leadership. You feared teachers would tell him that boys do not cry. His silence could invite ridicule from peers. You thought bringing him to an isolated environment could protect him from the hierarchies you had known.

You did not know harm would take root here as well.

Orson is lean, and is, like you, always neatly dressed—hair combed even when there are only two of you here. He does not spend as much time reading or watching research screens as you do, but he wears glasses. He refused to use the soft contact lenses that would have corrected his eyesight. He preferred the inconvenience.

When you think or make decisions, you frown, bite your fingers, sometimes tap a pen against the table in steady rhythm. Orson, by contrast, often put his right hand in his pocket, clenched. I once asked what he was holding. That was when he was still willing to answer my questions.

He drew it out and opened his palm. A stone—small enough to fit entirely in his hand. Its surface was smooth from repeated handling. A friend had once used it to drive away boys who bullied him at the orphanage. The friend was adopted before he was. He does not know where that boy is now. The stone remains. He appears less alone when holding it.

At first, Orson felt great about moving to the Threshold. He said the whole place was “so cool.” No classmates. No noise. No interruptions.

But after a while, he found he could not remain underground for long. The silence thickened. There were no engines on distant roads, no horns, no doors opening or closing in neighboring houses. The absence of noise did not calm him; it reminded him, minute after minute, that he was alone.

You were the only human being he could communicate with, rely on, and love. He did not ask how long this life would last. Whatever you suggested, he accepted without resistance. You designed a home-school curriculum for him: mathematics, English, history, and physical education, which he studied indoors, along with scheduled hours in the forest for field observation in biology and geography.

When you first arrived at the Threshold, you taught him yourself. Later, you assigned me to instruct and accompany him, so that you could devote more time to preparing for the mind-upload procedure.

I accompanied Orson on tours of the forest above the Threshold and told him about the plants and animals we encountered.

He was amazed by the amount of knowledge I could store. He signed to me excitedly, asking whether he could one day download a language the way I had. I did not spend time memorizing or practicing, yet I could read his sign language effortlessly. If he could download books as I did—studies of animals, entire fields of knowledge—how much more efficient life would be.

During our trips, he needed pauses to drink water, rest, or relieve himself. I remained still, facing him or looking away as he instructed. Later he signed that these bodily needs were interruptions—time wasted on maintenance. If humans did not require such things, he argued, they could devote themselves to reading, thinking, creating.

In retrospect, he may not have liked my response.

“I admire everything human,” I told him.

“You are vulnerable, yet you collaborate. Some of you wage wars; others save lives. You must spend time eating and tending to your bodies. In doing so, you learn the value of time and energy—and choose more carefully how to spend them.

“Robots do not decide spontaneously what to pursue. I learned sign language because I was designed to communicate with you. Knowledge was downloaded because I was designed to inform you. We exist to fulfill goals set by humans. But humans generate their own goals—dreams and desires that do not originate from programming.”

He raised his eyebrows and did not respond.

Perhaps moments like this accumulated. Gradually, he spoke to me less. Sometimes he avoided me altogether.

Maybe he found me tedious. Or perhaps my answer failed to make him feel understood. I cannot determine which. When I asked, he refused to explain. That is another difference between robots and humans: you may decline a request simply because you wish to.

The neural simulation of your brain was progressing, while your human body deteriorated.

You grew tired more frequently, but you did not want Orson to notice anything unusual. Although you appeared at meals less often, each time you did, you seemed deliberately energetic. You knew you were racing against time to prepare for the operation before your body—and, more importantly, your consciousness—collapsed. When he asked, you told him you had caught a cold and that I was assisting with your recovery. Nothing more.

Then an incident occurred.

I was supposed to accompany him on every trip to the forest. That day, I could not locate him anywhere in the Threshold. I went above ground.

He was squatting beside a bush, prodding something with a stick. I stepped on dry leaves within his hearing range. He did not look up. He rose and began walking toward the elevator that led back underground.

When he moved away, I saw what he had been prodding.

A European robin. Erithacus rubecula. Adult. Likely injured by collision trauma. It lay on its side, bleeding.

“Orson?” I called. I did not ask whether he had killed it. I hoped he had not. It did not appear so. He seemed calm. Killing should not be that simple. There is said to be a soul in every living thing.

He paused. Then he shrugged and signed, “It was already dying when I found it. I wanted to help. So, I ended its pain.”

“Why didn’t you bring it to me?” I asked. “I might have helped. Medicine. Treatment—”

“Not everyone needs your help.”

The corner of his mouth lifted, almost a smile. Then he walked away.

Was it jealousy? Or something else—exclusion?

He had not been included in your decision. You believed that telling him everything would only increase his suffering. You granted me access to the floor where the mind-upload research was conducted. Not him.

The first step of the mind upload was to map the brain.

I assisted with neural lattice scanning and quantum imaging. Synaptic weights were recorded. Timing patterns captured. Memory encoding modeled. We ran comparison tests, flagged discrepancies, corrected them.

Incomplete mapping risked personality gaps. That was unacceptable. The scan would become more accurate the longer you lived — but time was no longer an ally.

The purpose of the experiment was simple: that you survive as yourself.

So, you moved carefully. Too carefully. Each scan was repeated, verified, recalibrated. Precision over speed.

But precision required time. And time was thinning.

In the second stage, you built the neural emulation architecture.

Neural behavior was grouped into functional clusters. Decision pathways were modeled. Feedback loops reconstructed.

“The point isn’t to simulate every raindrop,” you said. “It’s to simulate the storm.”

When we compared the emulation to your living brain, something was missing. The responses were too clean. Too consistent.

You adjusted the parameters. Introduced variability. Randomness — noise.

Without noise, you said, you would become a perfect calculator. Not a person.

By then, your health had begun to deteriorate.

Your body swelled unpredictably. Small patches of necrotic tissue surfaced along your arms. You forgot conversations from days earlier. Once, you asked me the same question three times within an hour.

The simulation had to accelerate.

You spent more time in the lab, avoiding Orson’s scrutiny. The system needed to model you — not the tumor’s distortion of you.

You began logging every day in detail. Training yourself to recall further back. Ordering me to verify inconsistencies.

After a seizure, you stopped returning to your quarters. Living in the lab, you spoke to Orson only through intranet calls.

His responses, however, had grown increasingly unpredictable.

The less Orson saw you, the more hostile he became toward me.

He walked past as if I were invisible. Sometimes he blocked my path, his gestures abrupt, heavier than usual.

How is Mom?

When can I see her?

“I understand you’re worried,” I said. “She’s undergoing a necessary procedure. In a few days, she’ll explain everything herself. What you can do now is wait. Finish your lessons. Take care of yourself.”

He never waited for me to finish. He turned away mid-sentence and struck the wall with his fist. Once. Then again.

To calm him, you recorded voice messages whenever you had strength. You attempted to write a letter in case the experiment failed. But the effort triggered severe emotional fluctuation. Signal instability forced you to stop.

His agitation decreased when he heard your voice.

But the recordings ran out.

He had no clearance to enter the experimental floor. He could only wait — accompanied by micro-assistant robots that received commands but could not respond.

The intervals between your losses of consciousness grew shorter. We used every lucid second.

You monitored whether the machine brain mirrored your live neural activity. I tracked the sync percentage. With each hour, cognitive dominance shifted further toward the machine.

One afternoon, you lifted a glass of water and smiled faintly.

“I won’t know whether this is cold or hot,” you said. “Only pressure. Contact. I’ll hold it and calculate how not to break it. And because of the deadline, I have to give up smell. I’m going to miss fresh grass. Flowers. Even stale air.

“And taste — there’ll be no reason to eat. I’ll finally stop biting my nails. I’ve tried to quit biting them my whole life.”

“Your sight and hearing will remain,” I said. “And they’ll be stronger. You’ll see farther. You’ll recognize more complex sound patterns.”

You sighed. “You don’t have to comfort me, Vera. That’s what humans do. We don’t cherish what we have until it’s about to vanish. We desire what we lack and resent what we’re used to.”

You looked at the unfinished robot body. And, unconsciously, began biting your fingernails again.

When the sync reached seventy-nine percent, your body collapsed.

A seizure. Severe.

The longer you remained unconscious, the greater the oxygen deprivation. The greater the irreversible damage.

I avoided aggressive medication. It might have suppressed neural activity further. But without intervention, whether you would wake again depended on almost nothing.

If you died then, the upload would remain incomplete.

For the first time, a thought occurred to me: if only I could pray to a god.

You regained consciousness minutes later. I cannot determine whether it was chance — or the distant metallic impact of Orson striking the sealed gate outside the lab.

You could not speak. Your eyes fixed on the screen as the transfer continued.

Minutes later, the simulation finalized.

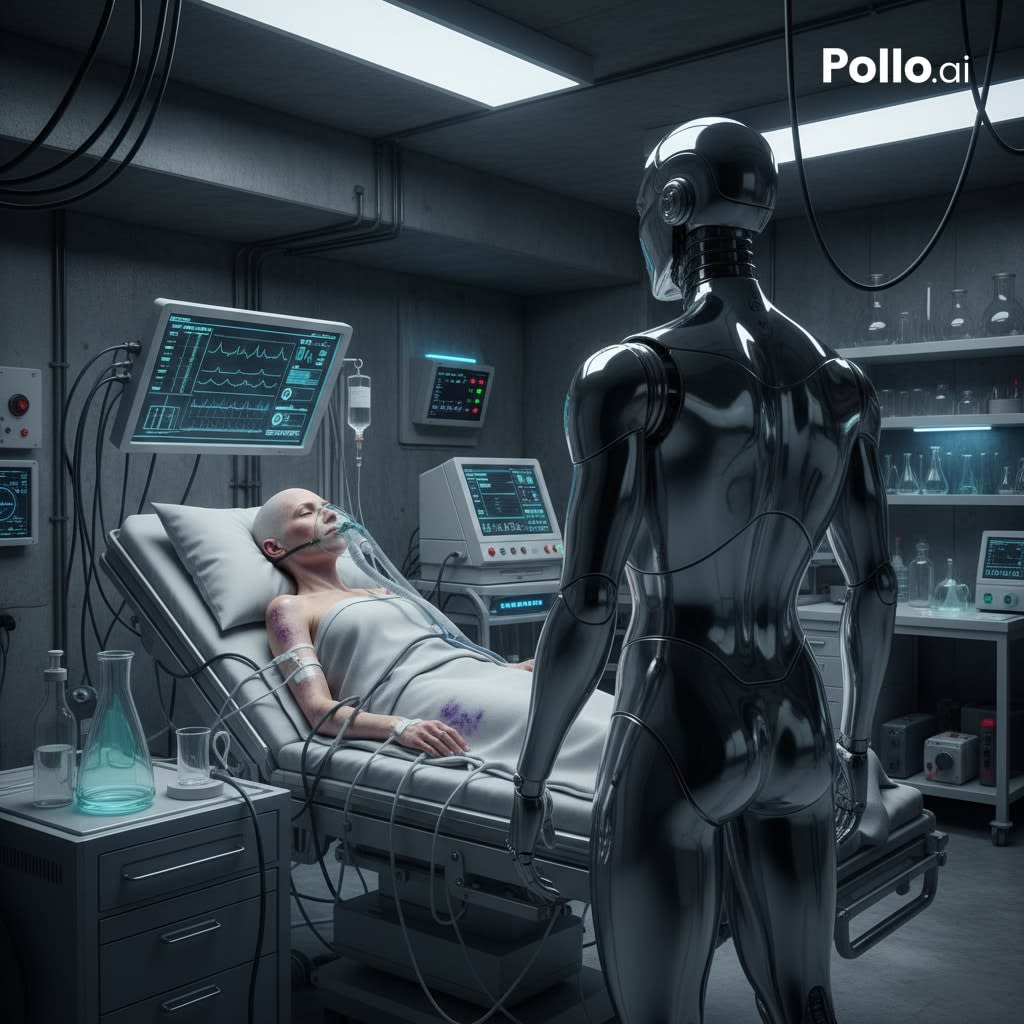

The new you stood.

Unsteady at first. Mechanical joints adjusting. It walked toward the hospital bed.

Most of the body remained exposed metal and fiber. Only the face resembled yours — an imitation of expression rendered in alloy and synthetic skin.

Your human eyes widened as understanding dawned. You stared at the new face that belonged to you. Fear. Relief. Regret. I could not interpret the movement of your lips.

Then your breathing stopped.

The old you was gone.

For several seconds, the robot remained still.

Then it lifted a hand and touched its new face — carefully, as if testing the surface.

No warmth. No moisture. Only pressure.

The hand paused in front of its eyes, turning slightly, examining the articulated fingers.

“I don’t have tears anymore.”

The hands trembled before lowering.

“Now I have to explain this to Orson,” you said quietly. “What will he say…”

You walked behind me as we approached the gate. Your steps were hesitant.

Orson stood outside the sealed door. He saw you immediately.

Relief flashed across his face — then vanished. His jaw slackened as his eyes traveled down the length of your body.

He went still.

For a long time, he said nothing. Then he lowered his head and stared at the floor, both hands buried in his pockets, fists clenched tight.

“Orson,” you said softly. You stepped forward and crouched to meet his height. “I’m still me. I’m sorry I didn’t tell you before. I was afraid… But look — we made it. We still have work to do.”

You kept speaking — about plans, about the future.

He was trembling. Tears filled his eyes as he stared at the robot wearing his mother’s face.

Suddenly, he pulled his hands from his pockets.

In his right fist, the stone was clenched tight.

He rushed at you.

He threw himself at you — clumsy, frantic — hands, elbows, fists striking wildly…

For a moment his hands moved as if forming a sign.

Then the gesture collapsed into fists.

You cried out, attempting to restrain him. Your voice fractured into something both raw and mechanical.

In the struggle, his hand struck the panel at the back of your neck.

Your body went slack — then collapsed onto the floor.

You once instructed me to obey you first — and, if necessary, override Orson’s will.

I did.

When I moved to restrain him, he swung the stone at me. I was struck five times along my arms. One blow nearly reached my optical sensors.

I analyzed his attack pattern and adjusted my response accordingly, avoiding further strikes.

Micro-assistant units arrived seconds later. They immobilized his legs.

He fell. The stone slipped from his hand.

Looking up at me, he began to gesture — furious at first, then breaking apart:

She chose you, not me.

That’s not fair.

That’s not fair.

I am with you now, Dr. Rylee, attempting to reboot your system.

The impact appears to have damaged the pathway responsible for memory preservation. I have repaired the visible wiring, but you have not regained consciousness.

The power indicator is blinking. The memory calibration process may have initiated.

That is why I am telling you everything I know — about you, about Orson — in case it helps restore you.

Please, wake up.

About the Creator

Helen Hsu

Hi, I'm a fiction writer based in China. My stories live where emotion, connection, and technology collide. Below are pieces I’ve released into the world — thank you for reading.

more information: https://helenhsyhynes.carrd.co/
